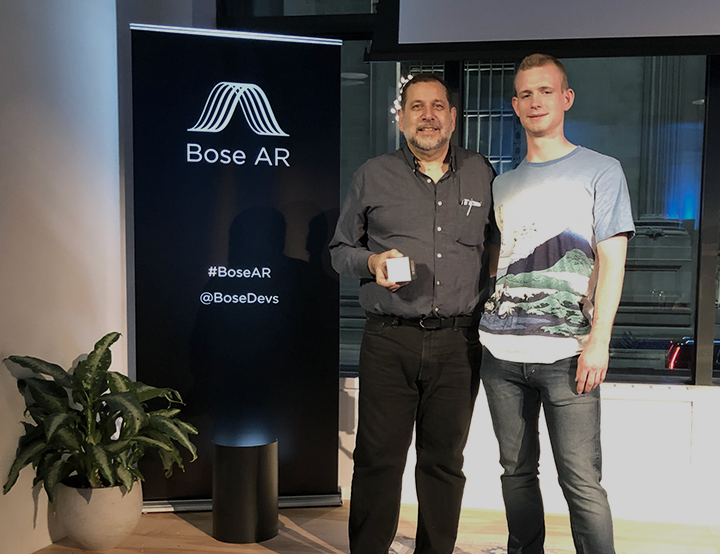

Evolving Technologies Corporation - Grand Prize Winner in the Bose AR @ Company NYC Pitch Challenge

6/15/2019

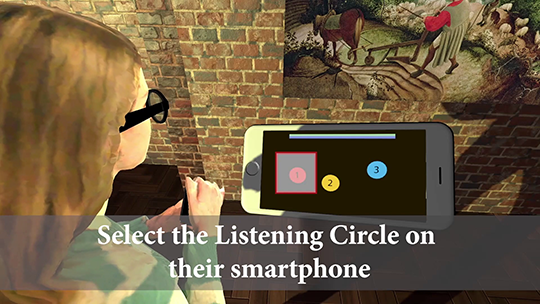

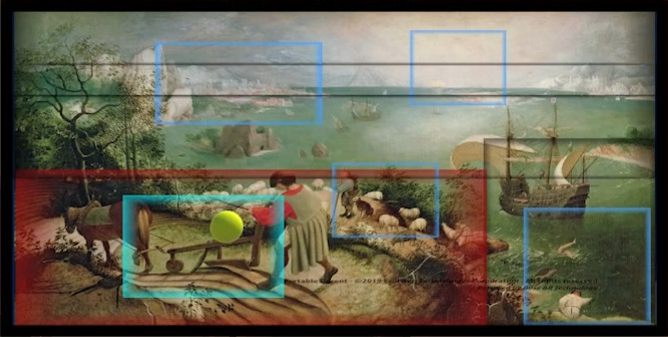

Recently, Bose (the same people known for their noise cancellation headphones) and Company.co (formerly Grand Central Tech) partnered to sponsor a competition for the most innovative and promising apps for the Bose AR Frames. We are pleased to announce that we are a grand prize winner in this competition. Josh Pratt and I developed Portable Docent, a Unity app built on Bose AR technology that combines spatial awareness and dynamic audio content to create an immersive and responsive audio experience for museum visitors. Through some fancy algorithms, the app accurately tracks the portions of a painting a museum visitor is gazing at and dynamically orchestrates ambient soundscapes and voice overs to match.

About the AR Frames

Unlike most augmented reality devices, the Bose AR Frames omit any augmented vision features. So, what in the world makes these glasses special? For one thing, they tag team with your (Android or iOS) phone. Having the phone in constant contact with the Frames opens the door to numerous kinds of uses. The Frames have speakers that project high quality sound into your ears. There's a built-in microphone. You can make and receive calls through the Frames. They respond to gestures like double-tapping on the side of the frame, nodding your head for Yes, and shaking your head from side to side. Aside from a long lasting battery, the Frames have accelerometers, gyroscopes, and a magnetic compass. The Frames easily fit over regular eyeglasses. They are designed for long lasting comfort.

Oh, just one more thing ... Bose has wisely elected to incorporate the same kind of hardware sensors in the QuietComfort 35 II (QC35 II) noise cancelling headphones as well as in every new headphone, like the new Bose 700. The implications of this are enormous, as there already are millions of AR capable headphones out there. And what is the cost to the Bose customer who already has an AR capable headphone? Zippo. Nada. Nothing. This translates to easy adoption and use.

How the App Started

Our app, Portable Docent, is relatively straightforward in design, but not so simple from an implementation standpoint. The app has to make it easy for someone who has the Bose Frames to walk up to a museum painting, look at different parts of the painting, and be immersed in ambient/atmospheric soundscapes as well as listen to voice overs based on the portions of the painting they are gazing at. To make this work seamlessly requires a bit of ingenuity. The Bose Frames can accurately track orientation, so they know how much you are looking up or down and turning left or right. They don't know your physical location. We tried a number of approaches to fill that gap, including Bluetooth beacons and the Kinect. The challenge with these is that the setup is far too complicated. The Kinect also limits the number of people that can be tracked simultaneously, has occlusion issues, and more. We opted instead for a simpler approach that involves identifying a few reference points; from there everything is automatically triangulated. No fuss. No muss. You just put on the glasses and enjoy.

An early rig for projecting paintings of various sizes

Calibration

We developed two methods of calibration. The first relies on a set of geometrical computations and measurements: a museum visitor enters their approximate height when first starting the Portable Docent app, steps inside a "Listening Circle" on the floor, taps to select the Listening Circle they are in, and immediately begins the audio enhanced painting experience. Our second calibration method, Quick Mode, takes under 10 seconds to calibrate to any painting from any viewing location.

Audio Environment

We have two kinds of regions: voice overs and ambient soundscapes. These regions can be positioned and sized to create the desired audio experience.
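To make the idea of gaze-driven regions concrete, here is a minimal sketch in Python (the app itself is built in Unity, so this is an illustration and not our implementation). It assumes the viewer stands at a known spot facing the wall, such as a Listening Circle, and has entered an approximate eye height. It then intersects the gaze direction reported by the head sensors with the wall plane and checks which regions contain that point. The `Region` class, the function names, and the rectangular region layout are all hypothetical.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Region:
    """Axis-aligned rectangle on the wall plane, in metres (hypothetical layout)."""
    name: str
    kind: str  # "voice_over" or "soundscape"
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def contains(self, x: float, y: float) -> bool:
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max


def gaze_point(viewer_x: float, eye_height: float, distance: float,
               yaw_deg: float, pitch_deg: float) -> Optional[Tuple[float, float]]:
    """Intersect the gaze ray with the wall plane.

    Assumes yaw 0 / pitch 0 means looking straight at the wall, positive yaw
    turns right and positive pitch looks up. Returns wall coordinates (x, y),
    or None when the viewer is not looking toward the wall.
    """
    if abs(yaw_deg) >= 90 or abs(pitch_deg) >= 90:
        return None
    yaw = math.radians(yaw_deg)
    pitch = math.radians(pitch_deg)
    x = viewer_x + distance * math.tan(yaw)
    y = eye_height + distance * math.tan(pitch) / math.cos(yaw)
    return (x, y)


def active_regions(regions: List[Region],
                   point: Optional[Tuple[float, float]]) -> List[str]:
    """Names of all regions that contain the gaze point (regions may overlap)."""
    if point is None:
        return []
    return [r.name for r in regions if r.contains(*point)]
```

Regions are allowed to overlap in this sketch, so a large soundscape region can keep playing while a smaller voice over region inside it is triggered.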
Aural information is a natural part of our environment, but audio information delivered on a device is usually separate from what we see. Why not let the audio curate the visual? It's exciting to see where this technology is heading. Clearly, there are some areas where we're breaking new ground.

In recent months I've been doing a lot with drones. I have my FAA (Part 107) Drone Pilot License and am creating software tools for aerial photogrammetry. At some point I'll post an entry about my serious work with drones, but in this post I want to write about something much more fun: controlling an indoor drone with Magic Leap purely through hand gestures.

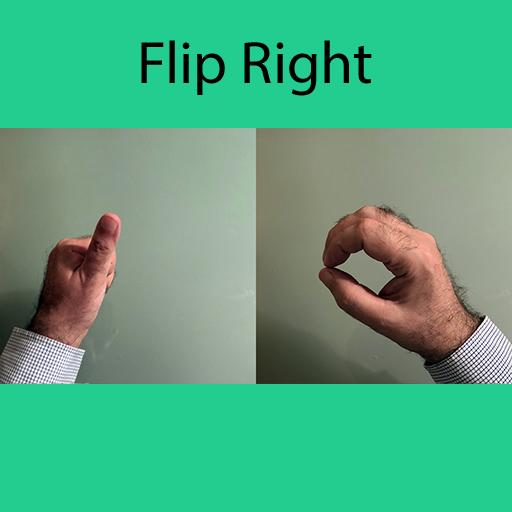

A couple of months ago there was a hackathon (the Unity/NYC XR Jam, for which my entry was awarded the best AR application) that took place at R-Lab in the Brooklyn Navy Yard. During that weekend I built a Magic Leap application with Unity to pilot a drone purely through 14 hand gestures like the one shown here:

The drone is the Tello, an educational drone manufactured by Ryze and designed primarily for indoor use.

The Tello costs about $100. The drone is remarkable for its price. If you want to start playing with drones for recreation, this would be a great first experience. Most significantly from my standpoint, it has a well-defined SDK.
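For a sense of what that SDK looks like, the Tello accepts plain-text commands over UDP. The sketch below is in Python and is not the code from the hackathon entry (which was a Unity application). The gesture names and the gesture-to-command mapping are hypothetical; the command strings, the default address `192.168.10.1:8889`, and the 20 to 500 cm distance range are taken from the published Tello SDK.

```python
import socket
from typing import Optional

# Default drone address from the Tello SDK documentation.
TELLO_ADDRESS = ("192.168.10.1", 8889)

# Hypothetical gesture names mapped to Tello SDK command templates.
GESTURE_COMMANDS = {
    "open_hand": "takeoff",
    "fist": "land",
    "thumb_up": "up {cm}",
    "thumb_down": "down {cm}",
    "point_left": "left {cm}",
    "point_right": "right {cm}",
    "push": "forward {cm}",
    "pull": "back {cm}",
}


def command_for(gesture: str, cm: int = 30) -> Optional[str]:
    """Translate a recognized gesture into a Tello SDK command string.

    Returns None for gestures that have no mapping. Distances are clamped
    to the 20-500 cm range the SDK accepts for movement commands.
    """
    template = GESTURE_COMMANDS.get(gesture)
    if template is None:
        return None
    cm = max(20, min(500, cm))
    return template.format(cm=cm)


def send(sock: socket.socket, text: str) -> None:
    """Send one SDK command to the drone as an ASCII UDP datagram."""
    sock.sendto(text.encode("ascii"), TELLO_ADDRESS)
```

In use, you would create a UDP socket with `socket.socket(socket.AF_INET, socket.SOCK_DGRAM)`, call `send(sock, "command")` once to put the drone into SDK mode, and then forward the result of `command_for(...)` each time a gesture is recognized.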

In any case, here is a video showing the drone in action purely managed through hand gestures.

Author: Loren Abdulezer


Copyright © 2022 Evolving Technologies Corporation
